Using Azure Storage Replication with SoftNAS®

Overview

This document explains how SoftNAS interacts with the Azure Storage Replication options, in particular GRS and RA-GRS storage. SoftNAS supports the GRS and RA-GRS replication options, and the sections below describe the scope of interaction between SoftNAS and Azure.

Azure Storage Replication

Azure offers four types of storage replication. Azure's own documentation covers the topic in more detail; the following is a high-level overview:

Data associated with an Azure Storage Account is always replicated to help prevent data loss from a hardware disk failure. The replication options differ in how many times the data is replicated and where the replicated copies are stored.

*SoftNAS simply sees Azure storage regardless of the selected replication option. The data replication process occurs outside of the scope of SoftNAS.

**Performance may differ depending on the replication option selected. In all cases, however, SoftNAS receives acknowledgment of a write operation to Azure storage once the synchronous replication of the data completes at the local Azure node. Replication of the data to other datacenters (ZRS, GRS and RA-GRS) occurs asynchronously, under the full control of Azure.

| Replication strategy | LRS | ZRS | GRS | RA-GRS |
|---|---|---|---|---|
| Data is replicated across multiple datacenters. | No | Yes | Yes | Yes |
| Data can be read from a secondary location as well as the primary location. | No | No | No | Yes |
| Number of copies of data maintained on separate nodes. | 3 | 3 | 6 | 6 |
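The table above can also be read as a small lookup structure. The following Python sketch is illustrative only: the `ReplicationOption` class and the `options_where` helper are hypothetical names, not part of SoftNAS or any Azure SDK, and the values are copied directly from the table.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class ReplicationOption:
    """One column of the replication table (hypothetical helper type)."""
    name: str
    multi_datacenter: bool    # data is replicated across multiple datacenters
    readable_secondary: bool  # data can be read from a secondary location
    copies: int               # copies maintained on separate nodes


# Values taken from the table in this document.
OPTIONS = {
    "LRS": ReplicationOption("LRS", False, False, 3),
    "ZRS": ReplicationOption("ZRS", True, False, 3),
    "GRS": ReplicationOption("GRS", True, False, 6),
    "RA-GRS": ReplicationOption("RA-GRS", True, True, 6),
}


def options_where(multi_datacenter: Optional[bool] = None,
                  readable_secondary: Optional[bool] = None) -> List[ReplicationOption]:
    """Return the options matching every filter that is not None."""
    result = []
    for opt in OPTIONS.values():
        if multi_datacenter is not None and opt.multi_datacenter != multi_datacenter:
            continue
        if readable_secondary is not None and opt.readable_secondary != readable_secondary:
            continue
        result.append(opt)
    return result
```

For example, `options_where(readable_secondary=True)` returns only the RA-GRS entry, which matches the second row of the table.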

SoftNAS SNAP HA™ with LRS, ZRS, GRS or RA-GRS

As mentioned in the bullets above, regardless of the type of Azure storage replication selected by the user, SoftNAS simply interfaces with an Azure Storage Account. SoftNAS is abstracted from any Azure Storage Replication operations that Azure performs on the storage, whether the data stays on a local storage node or is replicated across Azure regions.

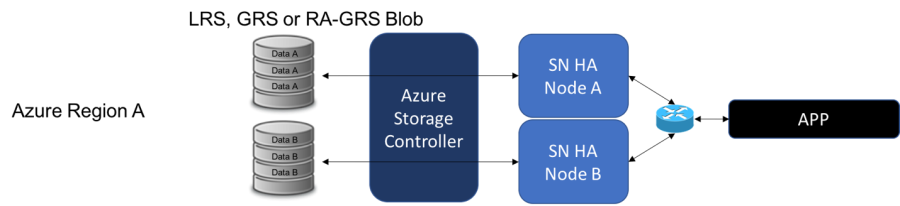

The diagram below represents the SoftNAS scope of Azure storage in a SNAP HA™ configuration, regardless of the replication option selected. All normal SNAP HA™ requirements and best practices apply, including the HA pair of nodes being in an Availability Set in the same Azure datacenter hardware cluster. SNAP HA™ cannot be split across regions, even if cross-region replication is done by Azure on the backend.

Azure Storage Replication with SoftNAS

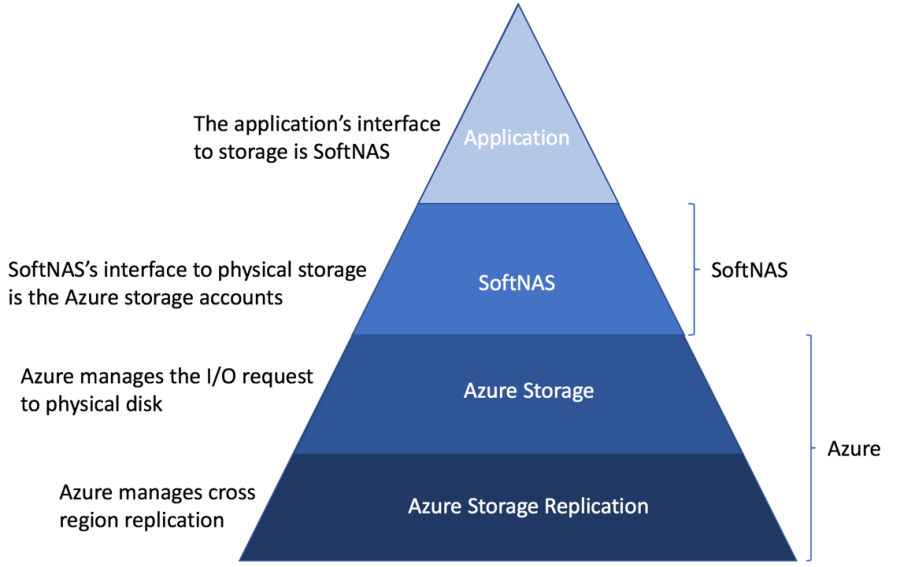

SoftNAS, like any other application leveraging Azure data from a storage account, does not need to change code to leverage the Disaster Recovery (DR) benefits that Azure Storage Replication provides. Think of Azure Storage Replication as a lower level of the Azure storage stack, with SoftNAS sitting two levels higher in the stack. Operations performed at the Azure Storage Replication level are abstracted from SoftNAS. SoftNAS is not aware that the replication is occurring.

Azure Storage Replication is an Azure feature, not a SoftNAS feature. SoftNAS has not performed formal testing to verify that, after an Azure geo-failover event, SoftNAS can be successfully reconnected to the data replicated to the recovery region. However, if the Azure geo-failover performs as documented, it should theoretically work, because a replicated copy of the data should exist as it was written to the original Azure storage. Azure clearly states that, due to the asynchronous nature of the cross-region data replication, data loss is a possibility:

Since asynchronous replication involves a delay, in the event of a regional disaster it is possible that changes that have not yet been replicated to the secondary region will be lost if the data cannot be recovered from the primary region.

Given the nature of an Azure geo-failover (the region the data was originally written to is no longer accessible), SoftNAS has no way to recover any data that was lost during the geo-failover. When the customer connects SoftNAS to the data in the recovery region, that data may or may not be complete.
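The data-loss window described above can be illustrated with a toy model. The sketch below is not Azure's implementation and uses no Azure API; the `GeoReplicatedStore` class and its methods are hypothetical. It only shows the principle: a write is acknowledged once it lands in the primary, the copy to the secondary happens later, and anything still queued when the primary is lost does not reach the secondary.

```python
from collections import deque


class GeoReplicatedStore:
    """Toy model of asynchronous cross-region replication (hypothetical)."""

    def __init__(self):
        self.primary = {}        # Region A: receives writes synchronously
        self.secondary = {}      # Region B: receives copies later
        self._pending = deque()  # changes not yet copied to Region B

    def write(self, key, value):
        """Store in the primary and acknowledge; replication is deferred."""
        self.primary[key] = value
        self._pending.append((key, value))
        return True  # acknowledgment returned before Region B has the data

    def replicate(self, max_items):
        """Copy up to max_items queued changes to the secondary."""
        copied = 0
        while self._pending and copied < max_items:
            key, value = self._pending.popleft()
            self.secondary[key] = value
            copied += 1
        return copied

    def geo_failover(self):
        """Simulate losing Region A; return keys whose changes were lost."""
        lost = [key for key, _ in self._pending]
        self._pending.clear()
        self.primary = {}
        return lost


store = GeoReplicatedStore()
for i in range(5):
    store.write(f"block-{i}", i)   # all five writes are acknowledged
store.replicate(3)                 # only three reach Region B in time
print(store.geo_failover())        # ['block-3', 'block-4']
```

In this model the application (SoftNAS, in this document's context) received an acknowledgment for all five writes, yet only three exist in the recovery region after the failover.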

SoftNAS SNAP HA™ with Azure Storage Replication

When a customer asks whether SoftNAS/SNAP HA™ can be used with Azure Storage Replication, the answer is yes. However, it is important to define what "can be used with" does and does not mean.

What it does mean:

SoftNAS supports the use of Azure storage options that support Azure Storage Replication (GRS/RA-GRS). The SoftNAS VMs do not know that Azure Storage Replication is happening asynchronously in the background. GRS or RA-GRS Azure storage simply looks like LRS to SoftNAS. It is important to keep this context in mind when speaking with a customer.

Due to the nature of GRS and RA-GRS replication, there may be some performance differences. As of this time, however, SoftNAS has not done any formal benchmarking of the differences between Azure storage replication options.

What it does not mean:

Use of Azure Storage Replication (GRS/RA-GRS) does not provide the ability to split a SoftNAS HA pair across multiple Azure regions. All the existing requirements and best practices of deploying SNAP HA™ in a local Azure availability set still apply.

It is important to understand the level of separation between what SoftNAS does and what Azure does when discussing Azure Storage Replication.

SNAP HA™ is used for High Availability.

Azure Storage replication is used for Disaster Recovery.

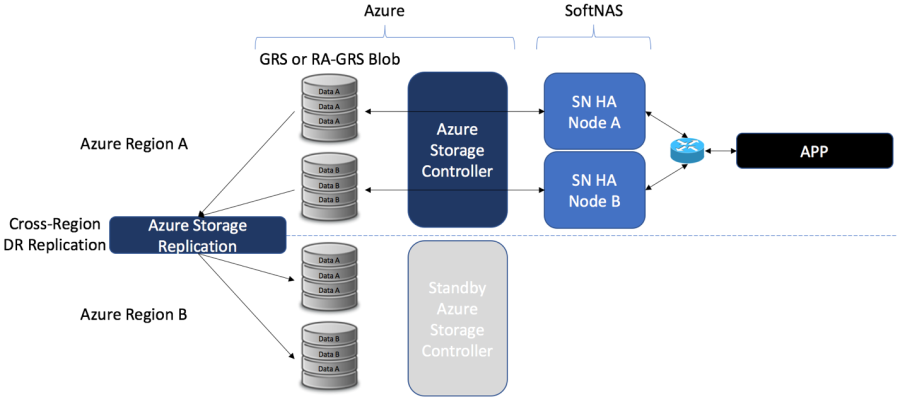

The accompanying diagram represents a basic deployment of SoftNAS SNAP HA™ using storage with Azure Storage Replication enabled. All the data written by SoftNAS in Region A to Azure storage is replicated to Region B by Azure:

* Both SoftNAS SNAP HA™ nodes are in the same region in an Azure Availability Set.

**If HA were not in use, only a single Azure Storage Account would be replicated.

SoftNAS Data Recovery with Azure Storage Replication

The level of DR preparedness a customer chooses to deploy is at the customer's discretion. A customer has the option to deploy standby SoftNAS VMs, stopped and ready to be started in the event of an Azure geo-failover. However, fully configuring the SoftNAS VMs in Region B is a manual process that can only take place after Azure completes the geo-failover. Note that the Azure geo-failover process is also manual and may take days to complete.

See the diagram on the right for a depiction of this standby deployment:

*The Standby SoftNAS HA Nodes are simply SoftNAS nodes that have been launched in Region B and stopped in preparation for an Azure geo-failover.

**The Standby SoftNAS Nodes are not connected to any Azure Storage Account while in standby, because the Storage Account with the replicated data in Region B is not accessible until after Azure performs a geo-failover. RA-GRS may provide read-only access, but as of this time it is not known whether the storage account used for read-only RA-GRS access is the same storage account that would be used after a geo-failover.

Expected steps to recover from a geo-failover with SoftNAS

(These steps have not been formally verified by SoftNAS)

After Azure has performed a geo-failover, the following is the expected state of Azure Region B, based on Azure documentation:

- No access to Azure Region A available.

- The data that has been replicated to Region B is now R/W accessible through the same Azure storage account that was used to connect to it in Region A.

- The data is no longer being replicated by Azure.

Assumed SoftNAS steps after an Azure geo-failover:

- SoftNAS VMs would need to be launched in Region B, or the standby SoftNAS VMs started if they exist.

- The Azure storage that was replicated would need to be imported into the Region B SoftNAS VMs using the same Azure storage account.

- Any application that was previously accessing SoftNAS in Region A would need to have its DNS changed to point to the SoftNAS servers in Region B.

- If the SoftNAS HA pair in Region A was in a failure state when Region A was lost, recovery in Region B after the above steps could have complications, because Azure clearly indicates that data may have been lost during a geo-failover.
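The ordering of the assumed steps above matters, since nothing on the SoftNAS side can proceed until Azure has finished the geo-failover. A minimal sketch of that ordering as a checklist follows. It is a hypothetical tracking aid only: the step keys and the `next_step` function are invented for illustration, it calls no SoftNAS or Azure API, and the steps themselves remain unverified, as noted at the top of this section.

```python
from typing import Optional, Set

# Ordered checklist derived from the assumed steps in this section.
# The keys are hypothetical labels used only by this sketch.
RECOVERY_STEPS = [
    ("azure_failover_complete",
     "Wait for Azure to complete the geo-failover to Region B."),
    ("softnas_vms_running",
     "Launch SoftNAS VMs in Region B, or start the standby VMs if they exist."),
    ("storage_imported",
     "Import the replicated Azure storage into the Region B SoftNAS VMs "
     "using the same Azure storage account."),
    ("dns_updated",
     "Change application DNS to point to the SoftNAS servers in Region B."),
]


def next_step(completed: Set[str]) -> Optional[str]:
    """Return the first step not yet completed, or None when all are done.

    Steps are strictly sequential: a later step is never offered while an
    earlier one is still outstanding.
    """
    for key, description in RECOVERY_STEPS:
        if key not in completed:
            return description
    return None


print(next_step({"azure_failover_complete"}))
```

With only the Azure failover marked complete, the sketch reports that the Region B SoftNAS VMs must be launched or started next.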